II. A Naturalistic Philosophy of Biology

This three-part series of blog posts is based on a talk I gave at the workshop "A New Naturalism: Towards a Progressive Theoretical Biology," which I co-organized with philosophers Dan Brooks and James DiFrisco at the Wissenschaftskolleg zu Berlin in October 2022. This part of the series draws heavily on a paper (available as a preprint here) that is currently under review at Royal Society Open Science. You can find part I here, and part III here.

In the first part of this series, I outlined why I think we urgently need more philosophy in biology today. More specifically, I argued that we need two kinds of philosophical approaches. On the one hand, we need a new naturalist philosophy of biology, concerned with examining the practices, methods, concepts, and theories of our discipline and how they are used to generate scientific knowledge (see this post). This branch of the philosophy of science is relevant for practicing biologists because it boosts their understanding of what is realistically achievable, increases the range of questions they can ask, clarifies which methods and approaches are most appropriate and promising in a given situation, and reveals how their work is best (and most wisely) contextualized within the big questions about life, human nature, and our place in the universe. On the other hand, we need a philosophical kind of theoretical biology, which operates within the life sciences and consists of philosophical methods that biologists themselves can use to better understand biological concepts and to solve biological problems (see part III).

WHAT IS NATURALIST PHILOSOPHY?

Let's talk about naturalist philosophy of biology first. And no, it has nothing to do with bird watching or nature documentaries. I don't mean that kind of "naturalist." What distinguishes a naturalist philosophy of biology (from a foundationalist one, let's say) are the following criteria:

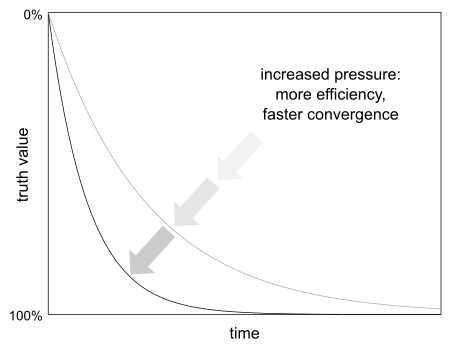

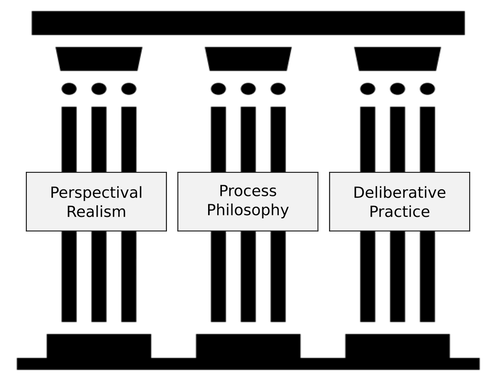

To summarize: naturalistic philosophy of biology attempts to accurately describe and understand how biology is actually done by real-world biologists at this present moment. Yet it must not remain purely descriptive. For it to be useful to biologists, we need an interventionist philosophy of biology that actively shapes the kinds of questions we can ask, the kinds of methods we can use, and the kinds of explanations we accept as valid answers to our questions. All of these will necessarily change as the field moves on. What we want, therefore, is an adaptive co-evolution of biology and its philosophy, a constant synergy, a dialectical spiral in which each discipline shapes and supports the other, lifting both to ever higher levels of understanding in the process.

The problem is that we are very far from the optimistic vision I have just outlined. In fact, very few scientists these days get any philosophical education at all. This is a serious problem, which I attempt to address with my own philosophy courses for researchers. It leads to a situation where many scientists hold very outdated philosophical beliefs, and many unironically proclaim that they do not adhere to any philosophical position at all, or even that "philosophy is dead," as the late physicist Stephen Hawking once remarked. This results in serious misconceptions among scientists about how science works and what counts as a valid scientific explanation. These misconceptions are now hindering progress in biology. Furthermore, they underlie the uncritical acceptance of the pernicious cult of productivity that reigns supreme in contemporary scientific research.

When scientists are aware of their philosophical views, they often profess adherence to something we could call naïve realism. Naïve realism is a form of objectivist realism that consists of a loose and varied assortment of philosophical preconceptions that, although mostly outdated, continue to shape our view of science and its role in society.
This view does not amount to a systematic or consistent philosophical doctrine. Instead, naïve realism is a mixed bag of more or less vaguely held convictions, which often contradict each other and leave many problems concerning the scientific method and the knowledge it produces unresolved. Without going into detail, naïve realism usually includes ideas from logical positivism, Popperian falsificationism, and Merton's sociological ethos of science (I've written about this in much more detail here, if you're interested).

Despite its intuitive appeal, naïve realism is not a naturalistic philosophy of science at all. On the contrary, it is a highly idealized view of how science should work. It paints a deceptively simple picture of a universal scientific method that, when applied properly, leads to an automatic, asymptotic convergence of our knowledge of the world toward the truth (see figure below). On this view, the process of producing knowledge can be fully formalized as a combination of empirical experimentation and logical inference for hypothesis testing, yielding ever more accurate, trustworthy, and objective scientific representations of the world. We may never reach a complete description of the world, but we are certainly getting closer and closer over time.

This view has some counterintuitive consequences. Not only does it imply that we should be able to replace scientists with algorithms some day (since science is seen as a completely formalizable activity), but it also suggests that we can generate more scientific knowledge simply by increasing the productivity of the knowledge-production system: increased pressure, better efficiency, faster convergence. Easy! Unfortunately, due to its (somewhat ironic) detachment from reality, naïve realism leads to all kinds of unintended consequences when applied to the actual process of doing science.
One problem is that too much pressure limits creativity and prevents researchers from taking on original or high-risk projects. Another problem is that we give ourselves less and less time to think. We're always rushing into the next project, adopting the next method, generating the next big data set. This way, the research process gets stuck in local optima of the knowledge landscape. Every evolutionary theorist knows that too little variation leads to suboptimal outcomes. This is exactly what is happening in biology today. We are becoming trapped by our own ambitions, our rush to publish new results.

How do we get out of this dilemma? What is needed is a less simplistic, less mechanistic approach to science: an approach that reflects the messy reality of limited human beings doing research in an astonishingly complex world far beyond our grasp, and that focuses on the quality of the knowledge-production process rather than the amount of output it produces. Luckily, such an approach is already available. The challenge is to make it more widely known, not just among philosophers of science but among researchers in the life sciences themselves. This naturalist philosophy of science rests on three main pillars (see figure below):

1. SCIENCE AS PERSPECTIVE

The first problem that naïve realism faces is that there simply is no universal scientific method. Science is quite obviously a cultural construct, in the sense that it consists of practices that involve the finite cognitive and technological abilities of human beings, firmly embedded in a specific social and historical context. For this reason, scientists use quite different approaches depending on the problem they are trying to solve, the traditions of their scientific discipline, and their own educational background and cognitive abilities.

This kind of relativist view can be taken to extremes, however. Strong forms of social constructivism claim that science is nothing but social discourse, and that the knowledge it produces is no better than any other way of knowing, such as poetry or religion, which are also considered types of social discourse. This strong constructivist position is certainly not naturalistic, and it is just as oversimplified as naïve realism. Therefore, I believe that a naturalistic philosophy of biology must find a middle way between the opposing extremes of social constructivism and naïve realism.

An approach that achieves this is perspectival realism. The best way to learn about this philosophy is to read Bill Wimsatt's "Re-engineering Philosophy for Limited Beings." It's not an easy read, but it will change the way you see the world, I can promise you that much. In addition, I recommend Ron Giere's "Scientific Perspectivism" (which gives a quick overview of the essentials) and Michela Massimi's "Perspectival Realism" (published this year). Finally, Roy Bhaskar's critical realism is worth mentioning as a pioneering branch of the perspectivist family of philosophies described here. I will mainly rely on Wimsatt's excellent book in what follows.
Perspectival realism holds that there is an accessible reality, a causal structure of the universe, whose existence is independent of observers and their efforts to understand it. Science provides a collection of methodologies and practices designed to let us gain trustworthy knowledge about the structure of reality while minimizing bias and the danger of self-deception. At the same time, perspectival realism acknowledges that we cannot step out of our own heads: it is impossible to gain a purely objective “view from nowhere.” Each individual researcher and each society has its own unique perspective on the world, and these perspectives matter for science.

It bears repeating at this point that perspectivism is not relativism. A scientific perspective is not just someone's opinion or point of view. This is the difference between what Richard Bernstein has called flabby versus engaged pluralism: each new perspective must be rigorously justified. Wimsatt defines a perspective as an “intriguingly quasi-subjective (or at least observer, technique or technology-relative) cut on the phenomena characteristic of a system.” Perspectives may be limited and context-dependent, but they are also grounded in reality. They are not a bug, but a central feature of the scientific approach. Our perspectives are what connect us to the world. It is only through them, by systematically examining their differences and connections, that we can gain any kind of intersubjective access to reality at all. This is how we produce (scientific) knowledge that is sound, robust, and trustworthy. In fact, it is more robust than what we get from any other way of knowing. This is, and remains, exactly the purpose and societal function of science. It leads us to a number of powerful principles that arise from a perspectivist-realist approach to science:

Perspectival realism is relevant for a naturalist philosophy of science because it takes the practice of doing science for what it is, instead of aiming for some unattainable ideal. At the same time, it acknowledges and justifies the special status of scientific knowledge compared to other ways of knowing. In addition, it shifts our attention from the product or outcome of the scientific process to the quality of that process itself. How we establish our facts matters. This is why we will be talking about the importance of process thinking for a naturalistic philosophy of science next.

2. SCIENCE AS PROCESS

The second major criticism that naïve realism must face is that it is excessively focused on research outcomes (science as a producer of immutable facts), thereby neglecting the intricacies and the importance of the process of inquiry. Looking at scientific knowledge only as the product of science is like looking at art in a museum: we see the finished piece, but not how it was made. The product of science is only as good as the process that generates it. Moreover, many perfectly planned and executed research projects fail to meet their targets, but that is often a good thing: scientific progress relies as much on failure as it does on success (see above). Some of the biggest scientific breakthroughs and conceptual revolutions have come from projects that failed in interesting ways. Think of the unsuccessful attempt to fully formalize mathematics, which led to Gödel's incompleteness theorems, or the failure to confirm the existence of phlogiston, caloric, and the luminiferous ether, which opened the way for modern chemistry, thermodynamics, and special relativity. Adhering too tightly to a predetermined worldview or research plan can prevent us from following up on the kind of surprising new opportunities that are at the core of scientific innovation.
For this reason, we should focus more on whether we are doing science the right way, not on whether we produce the kinds of results we expected to find. More often than not, the goal in basic science is the journey.

First of all, scientific knowledge itself is not fixed. It is not a simple collection of unalterable facts. The edifice of our scientific knowledge is constantly being extended, and at the same time it is in constant need of maintenance and renovation. This process never ends. For all practical purposes, the universe is cognitively inexhaustible. There is always more for us to learn. As finite beings, our knowledge of the world will forever remain incomplete. Besides, what we can know (and also what we want or need to know) changes significantly over time. Our goalposts are constantly shifting. The growth of knowledge may be unstoppable, but it is also at times erratic, improvised, and messy: anything but the straight convergence path of naïve realism depicted in the figure above.

Once we realize this, the process of knowledge production becomes an incredibly rich and intricate object of study in itself. The aim and focus of our naturalist philosophy of science must be adjusted accordingly. Naïve realism considers knowledge in an abstract manner (e.g., as "justified true belief") and tries to find universal principles that allow us to establish it beyond any reasonable doubt. Naturalist philosophy of science, in contrast, goes for a more humble (but also much more achievable) target: to understand and assess the quality of actual human research activities, including technological and methodological aspects, but also individual cognitive performance and the social structure of scientific communities. It asks which strategies we, as finite beings in practice and given our particular circumstances, can and should be using to improve our knowledge of the world.
As Philip Kitcher has pointed out, the overall goal of naturalist philosophy is to collect a compendium of locally optimal processes and practices that can be applied to the kinds of problems humans are likely to encounter. This is a much more modest and realistic aim than any quixotic quest for absolute or certain knowledge, but it is still extremely ambitious. Like the expansion of scientific knowledge itself, it is a never-ending process of iterative and recursive improvement. As limited beings, we are condemned to always build on the imperfect basis of what we have already constructed.

Just like perspectival realism, a process philosophy of science fosters context-specific strategies that allow us to attain a given set of goals. What is important for our discussion is that different research strategies and practices will be optimal under different circumstances. There is no universally optimal strategy for solving problems: there is no free lunch. Which approach to choose will depend on the current state of knowledge and level of technological development, the available human, material, and financial resources, and the scientific (and non-scientific) goals a project attempts to achieve. The right choice of strategy is itself an empirical question. A naturalist philosophy of science must be based on history and on empirical insights into error-prone heuristics that have worked for similar goals under similar circumstances before. We cannot justify scientific knowledge in an abstract and general way, but we can get better over time at appraising its robustness and value by studying the process of inquiry itself, in all its glorious complexity, with all its historical contingencies and cultural idiosyncrasies. An interesting example of an insight gained from such an inquiry is what Thomas Kuhn called the essential tension between a productive research tradition and risky innovation.
In computer science, this has been recast as the strategic trade-off between exploration (gathering new information) and exploitation (putting existing information to work). For any realistic research setting, this trade-off cannot be settled explicitly as a fixed ratio or a set of general rules. Instead, we need to switch strategy dynamically, based on local criteria and incomplete knowledge. The situation is far from hopeless, though, since some of these criteria can be determined empirically. For instance, it pays for an individual researcher, or an entire research community, to explore at the onset of an inquiry. This happens at the beginning of an individual research career, or when a new research field opens up. Over time, as a researcher or field matures and information accumulates, exploration yields diminishing returns. At some point, it is time to switch over to exploitation. This is an entirely rational meta-strategy, inexorably leading people (and research fields) to become more conservative over time, a tendency that has been robustly confirmed by ample empirical evidence. Here, we have an example where the optimal research strategy depends on the process of inquiry itself.

A healthy research environment provides scientists with enough flexibility to switch strategy dynamically, depending on circumstances. Unfortunately, our contemporary research system does not work this way. The fixation on short-term performance, assessed purely by measuring research output, has locked the process of inquiry firmly into exploitation mode. Exploration almost never pays off in such a system. It requires too much time and effort, and a willingness to fail. Exploration may be bad for productivity in the short term, but it is essential for innovation in the long run. This is the dilemma I outlined above: we are getting stuck on local (suboptimal) peaks of knowledge.
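To make the exploration-exploitation trade-off a little more concrete, here is a minimal sketch of the textbook epsilon-greedy multi-armed bandit in Python. It is my own toy illustration, not a model from the paper: the three "research directions" (arms), their success probabilities, and the exploration schedule are all hypothetical assumptions. One run never explores (pure exploitation); the other explores heavily at first and then gradually shifts to exploitation, mirroring the meta-strategy described above.

```python
import random


def run_bandit(true_means, steps, epsilon_schedule, seed=0):
    """Epsilon-greedy multi-armed bandit (toy model, hypothetical parameters).

    true_means: assumed success probability of each research direction (arm).
    epsilon_schedule: function mapping step t to the probability of exploring.
    Returns (total reward, number of times each arm was tried).
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms        # how often each direction was pursued
    estimates = [0.0] * n_arms   # running mean payoff observed per direction
    total = 0
    for t in range(steps):
        if rng.random() < epsilon_schedule(t):
            arm = rng.randrange(n_arms)  # explore: try any direction at random
        else:
            # Exploit: pick the best-looking direction so far.
            # Ties go to the lowest index, so with epsilon = 0 the first
            # direction is never abandoned.
            arm = max(range(n_arms), key=lambda a: estimates[a])
        reward = 1 if rng.random() < true_means[arm] else 0
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # incremental mean
        total += reward
    return total, counts


TRUE_MEANS = [0.2, 0.5, 0.8]  # hypothetical payoffs of three research directions
STEPS = 5000

exploit_only = run_bandit(TRUE_MEANS, STEPS, lambda t: 0.0)
explore_then_exploit = run_bandit(TRUE_MEANS, STEPS, lambda t: 1.0 / (1.0 + t / 50.0))

print("pure exploitation:    ", exploit_only)
print("explore, then exploit:", explore_then_exploit)
```

The pure exploiter never leaves the first direction it tried, however poor it is, while the decaying schedule spends its early steps sampling all three options and then concentrates on the most rewarding one. The decay constant (50) is arbitrary; the point is purely qualitative, since real research offers nothing like well-defined, stationary payoffs.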
Only an empirically grounded understanding of the process of inquiry itself can lead us out of the trap of local optima. But this alone is not enough. We also need a better understanding of the social dimension of doing science, which is what we will be discussing next.

3. SCIENCE AS DELIBERATION

The third major criticism that naïve realism must face is that it is obsessed with consensus and uniformity. Many people believe that the authority of science stems from unanimity and is undermined whenever scientists disagree with each other. Ongoing controversies about climate science or evolutionary biology are good examples of this sentiment. To a naïve realist, the ultimate aim of science is to provide a single unified account (an elusive unified theory of everything) that most accurately represents all of reality. This kind of thinking about science thrives on competition: let the best argument (or theory) prevail. Truth is established by debate, which is won by persuading the majority of experts and stakeholders in a field that some perspective is better than all its competitors. There can only be one factual explanation. Everything else is mere opinion.

However, there are good reasons to doubt this view. In fact, uniformity can be harmful. This is because all scientific theories are underdetermined by empirical evidence. In other words, there is always an indefinite number of scientific theories able to explain a given set of observed phenomena. For most scientific problems, it is impossible in practice to settle unambiguously on a single best solution based on evidence alone. Even worse: in most situations, we have no way of knowing how many possible theories there actually are. Many alternatives remain unconsidered. Because of all this, the coexistence of competing theories need not be a bad thing. In fact, settling a justified scientific controversy too early may create the appearance of agreement where there is none.
It certainly privileges the status quo, which is generally the majority opinion, and it suppresses (and therefore violates) the voices of those who hold a justified minority view that is not easy to dismiss. In summary, too much pressure for unanimity leads to a dictatorship of the majority and undermines the collective process of discovery within a scientific community.

Let us take a closer look at what this process is. Specifically, let us ask which form of information exchange between scientists is most conducive to cultivating and utilizing the collective intelligence of the community. In the face of uncertainty and underdetermination, it is deliberation, not debate, that achieves this goal. Deliberation is a form of discussion based on dialogue rather than debate. The main aim of a deliberator is not to win an argument by persuasion, but to gain a comprehensive understanding of all valid perspectives present in the room, and to make the most informed choice possible based on that understanding. What matters most is not an optimal, unanimous outcome, but the quality of the process of deliberation itself, which is greatly enhanced by the presence of non-dismissible minorities. The quality of a scientific theory increases with every challenge it receives. Such challenges can come in the form of empirical tests, or of thoughtful and constructive criticism of a theory's contents. The deliberative process, with the minority positions that provide these challenges, is stifled by too much pressure for a uniform outcome. As long as matters are not settled by evidence and reason, it is better, as a community, to suspend judgement and to let alternative explanations coexist. This allows us to explore. But, like other exploratory processes, deliberation needs time and effort. Deliberative processes cannot (and should not) be rushed.
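The underdetermination argument in this section can be made concrete with a deliberately simple mathematical toy, which is my own illustration rather than anything from the paper. Here, a "theory" is just a curve, the "evidence" is three hypothetical data points, and the competing theories form the family y = 2x + 1 + c·x(x-1)(x-2) with a free parameter c. Because the added cubic term vanishes at every observed point, each value of c fits the data perfectly, yet the candidates disagree about any unobserved case. Real scientific theories are of course not just curves; the sketch only illustrates the logical point that finite evidence cannot single out one explanation.

```python
# Hypothetical observations: (x, y) pairs that every candidate "theory" must reproduce.
OBSERVATIONS = {0: 1, 1: 3, 2: 5}


def make_theory(c):
    """Return the hypothetical theory y = 2x + 1 + c*x*(x-1)*(x-2).

    The cubic term is zero at x = 0, 1 and 2, so every value of c
    fits the three observations exactly.
    """
    def theory(x):
        return 2 * x + 1 + c * x * (x - 1) * (x - 2)
    return theory


theories = {c: make_theory(c) for c in (0, 1, -3, 5)}

# Every candidate agrees with all of the evidence ...
fits_all = all(t(x) == y for t in theories.values() for x, y in OBSERVATIONS.items())

# ... yet they make different predictions for an unobserved case, x = 3.
predictions_at_3 = {c: t(3) for c, t in theories.items()}

print(fits_all)          # True
print(predictions_at_3)  # {0: 7, 1: 13, -3: -11, 5: 37}
```

Since c can take any value, there are infinitely many such curves, and this family is only one of endlessly many ways to construct rivals that agree with the same data.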
SCIENTIFIC (PSEUDO)CONTROVERSY: AN EXAMPLE OF NATURALIST PHILOSOPHY IN ACTION

Scientific controversies provide a powerful example illustrating all three pillars of naturalistic philosophy of science in action. Let us take a quick look at an ongoing debate in evolutionary theory. It has its historical roots in the reduction of Darwinian evolution to evolutionary genetics, which took place from the 1920s onward. This shift of focus, away from the organism's struggle for survival and towards an evolutionary theory based on changes in gene frequencies within populations, is called the Modern Evolutionary Synthesis. In recent decades, a movement has emerged that challenges this purely reductionist approach to evolution. Since this movement was not officially out to overthrow the classical synthesis, but rather to add developmental and ecological aspects to its perspective, it called itself the Extended Evolutionary Synthesis. Since its emergence, there have been several high-profile publications (see here, or here, for example) debating whether such an extension is really necessary, useful, or neither.

Based on what I have said before about perspectivism and deliberation, you may think that such a diversity of justified positions would be fruitful and conducive to scientific progress in evolutionary biology. Unfortunately, this could not be further from the truth. The controversy over the Extended Evolutionary Synthesis is particularly interesting, since the polarization between two dominant positions (which is based on a pseudo-debate, as we shall see) leads to the exclusion of rigorously argued alternative views. The duopoly acts like a monopoly, destroying proper deliberative practice in the process. As Wimsatt points out, the failure to recognize or acknowledge the perspectival nature of scientific knowledge leads to many misunderstandings in science.
Simply put, there are two types of controversies that we may distinguish. The first is a genuine dispute, usually about factual, conceptual, or methodological matters. The second is a territorial conflict, whose causes are, to an important degree, political or sociological in nature. The true nature of the latter is often hidden behind a smokescreen of pseudo-debates about matters that could easily be resolved if the participants only realized that they are approaching the same problem (or at least related problems) from different perspectives. Instead of struggling over power and money, the disputants in such controversies could move on by simply learning how to talk to each other across the fence, that is, across their perspectival divide.

Clearly, the "controversy" over the Extended Evolutionary Synthesis is a pseudo-debate of this latter kind. One side is interested in the sources of evolutionary variation and its ecological implications, the other in the consequences of natural selection. They are two sides of the same coin. But the two communities are in direct competition for funding and influence, which prevents a true dialogue from happening as long as both sides profit from polarization.

This is not all we can learn about the importance of perspectivism from this debate, however. Another aspect of the debate is the matter of a synthetic theory of evolution itself. Why extend a synthesis that nobody ever needed in the first place? Evolution is the quintessential process that generates diversity. The sources of variation in evolution are as unpredictable as they are situation-dependent. The idea of developing a synthetic theory for the sources of variation in evolution is patently absurd. The generation of variation among organisms is a highly complex process. What we need to tackle it are as many valid and well-justified perspectives as we can get.
They should be as consistent as possible with each other, but there is no reason to assume they will ever add up to a general, overarching synthesis. Each evolutionary problem will have its own solution. Some of these solutions will be more or less related to each other, but no more. In fact, if a general account of the sources of variation were possible, then evolution would not be truly open-ended or innovative (see part III). Why is this fundamental issue never even debated or (even better) deliberated? Because the few people voicing it are rarely heard above the din of the pseudo-debate about unrecognized perspectives. They are drowned out. We do not see the elephant in the room, because we are demolishing the china shop all by ourselves. My assessment of deliberative practice in evolutionary biology is therefore bleak: the process is completely broken, and very few people even realize it. With better literacy in the naturalistic philosophy of science, all this might have been prevented. The whole pseudo-debate is the consequence of a largely outdated view of science. It is, at heart, a philosophical problem.

SUMMARY: TOWARDS AN ECOLOGICAL VISION FOR SCIENCE

Above, I have outlined the three main pillars of a naturalist philosophy of science that is tailored to the needs of practicing researchers in the life sciences and beyond. Its highest aim is to foster, and put to good use, the collective intelligence of our research communities through proper deliberative processes. To achieve this, we need research communities that are diverse and whose members are philosophically educated about how to harvest this diversity: when engaged pluralism is a good thing, and when it becomes flabby. Such viable, diverse communities of scientists embody what I would call an “ecological” vision for science, which stands in stark contrast to our current industrial model of doing research. I compare the two approaches in the table below.
Note that both models are rough, highly idealized sketches at best. They represent very different visions of how research ought to be done: two alternative versions of the ethos of science.

I have argued that the naïve realist view of science is not, in fact, realistic at all. In its stead, I have presented a naturalist philosophy of science that adequately takes into account the biases and capabilities of limited human beings solving problems in a world that will forever exceed our grasp. The ecological research model proposed here is less focused on direct exploitation, and yet it has the potential to be more productive in the long term than the current industrial system. However, its practical implementation will not be easy, due to the short-term productivity dilemma we have maneuvered ourselves into.

Escaping this dilemma requires a deep understanding of the philosophical foundations, as well as of the social and cognitive processes that enable and facilitate scientific progress. Identifying and assessing such processes is an empirical problem, which is only beginning to be tackled and understood today. Such empirical investigations must be grounded in a suitable naturalist philosophical framework and a correspondingly revised ethos of science. This framework must acknowledge the contextual and processual nature of knowledge production. It needs to focus directly on the quality of this process, rather than being fixated exclusively on the outcome of scientific projects. In this way, naturalist philosophy of science will not only benefit the individual scientist by making her a better researcher, but will also strive to improve the quality of community-level processes of scientific investigation. It is not merely descriptive: the naturalist philosophy I envisage changes the way we do science, and is in turn changed by the science it engages with.

Apologies: this post has ended up being a little longer than I anticipated.
If you're not tired of my ramblings yet, go on to part III, which discusses how we can use naturalist philosophy within biology: a philosophical kind of theoretical biology for practicing biologists to tackle biological problems.

1 Comment

Carlos Martinez

29/10/2022 05:25:56

There is no link for part 3



Johannes Jäger

Life beyond dogma!
